Introduction to RAG (Retrieval-Augmented Generation)

Retrieval-Augmented Generation (RAG) is one of the most important architectures in modern AI applications.

If you are building chatbots, internal AI tools, search assistants, or GenAI products for real users, you need to understand RAG.

This article explains RAG from the basics to the reasoning behind each step, in simple English, with clear logic and examples.

1️⃣ What Is RAG?

RAG (Retrieval-Augmented Generation) is an AI approach where:

The system retrieves relevant information from external data

Then generates an answer using an LLM

The answer is grounded in real documents, not guesses

In one line:

RAG = Search + LLM

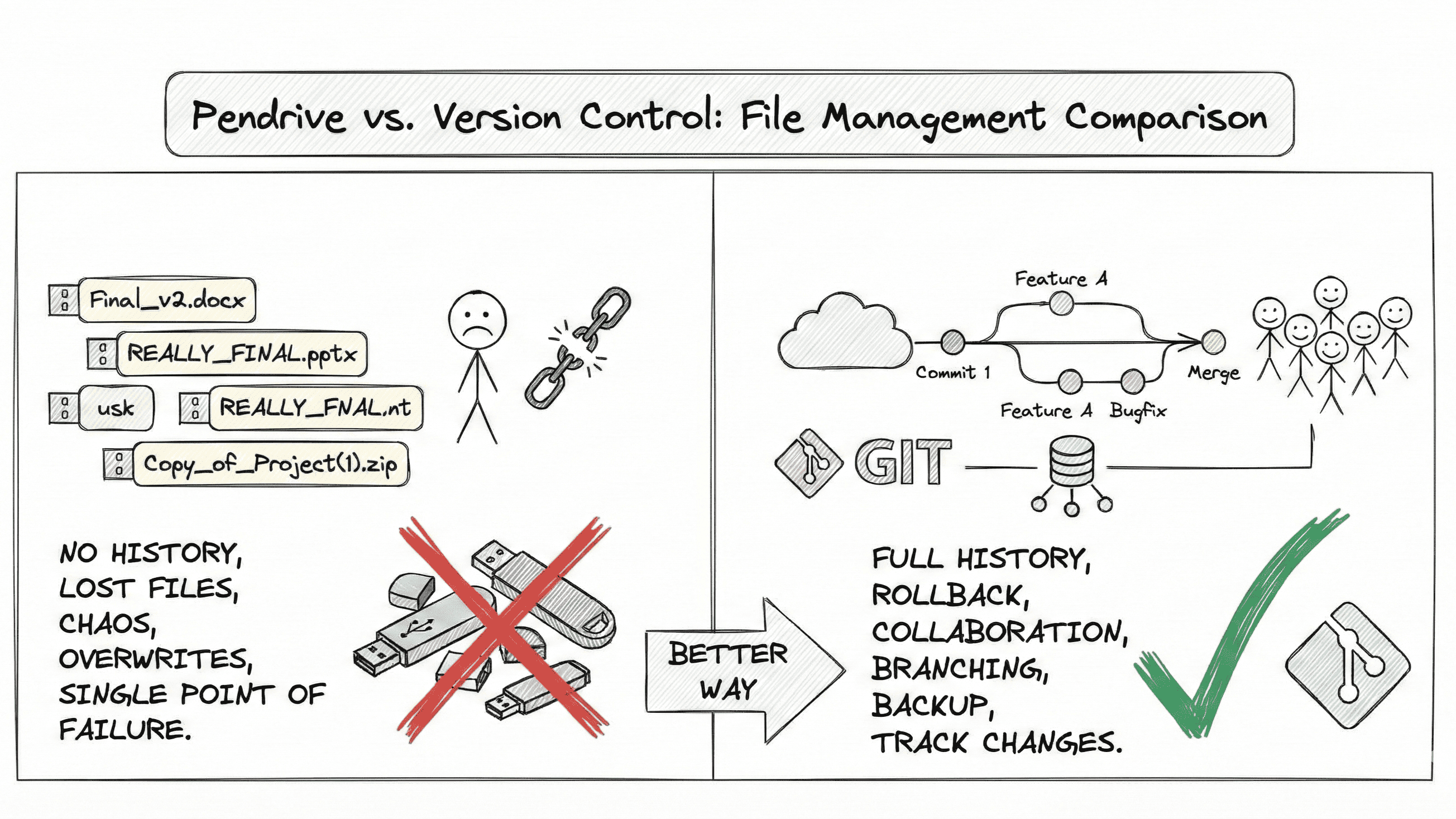

2️⃣ Why RAG Is Used

Large Language Models (LLMs):

❌ Don’t know your private data

❌ Don’t have real-time knowledge

❌ Can hallucinate (confident but wrong answers)

Example

User asks:

“What is our company’s leave policy?”

LLM without RAG:

“Most companies allow 20 days leave…” ❌ (hallucination)

LLM with RAG:

“According to your HR policy document, employees get 18 days leave.” ✅

This is why RAG exists:

Accuracy

Trust

Real-world usability

3️⃣ Why RAG Exists (Core Reason)

RAG exists to solve three fundamental problems:

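| Problem | Without RAG | With RAG |
| --- | --- | --- |
| Hallucination | Higher risk | Lower risk, because answers draw on retrieved text |
| Private data access | Not possible | Possible through retrieval |
| Data freshness | Limited to training data | As current as the indexed documents |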

👉 RAG makes AI reliable enough for production.

4️⃣ How RAG Works (Retriever + Generator)

RAG has two main components:

🔍 Retriever

Searches your data for relevant information

Uses vector similarity search

Returns top-k matching chunks

✍️ Generator

LLM (GPT, Claude, etc.)

Takes retrieved content + user query

Generates final answer

Simple Example (Step-by-Step)

User question

“How do I reset my password?”

Step 1: Retriever

Searches help-docs

Finds a chunk:

“To reset your password, go to Settings → Security → Reset Password…”

Step 2: Generator

Combines:

User question

Retrieved text

Produces:

“You can reset your password by going to Settings → Security → Reset Password…”

👉 The LLM is not guessing; it is working from the retrieved text.
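The two steps above can be sketched in a few lines of Python. This is a toy illustration, not a production retriever: `HELP_DOCS`, `tokenize`, `retrieve`, and `build_prompt` are hypothetical names, the extra help-doc sentences are invented for the example, and word overlap stands in for the vector similarity search a real retriever would use. No LLM is called; the sketch stops at the prompt that would be sent to one.

```python
import re

# Hypothetical help-doc chunks; in a real system these come from the index.
HELP_DOCS = [
    "To reset your password, go to Settings → Security → Reset Password.",
    "To change your profile photo, open Profile and choose Edit.",
    "Invoices are emailed on the first day of each month.",
]


def tokenize(text: str) -> set[str]:
    """Lowercase the text and return its set of alphabetic words."""
    return set(re.findall(r"[a-z]+", text.lower()))


def retrieve(question: str, chunks: list[str], k: int = 1) -> list[str]:
    """Step 1: rank chunks by how many words they share with the question.

    Word overlap is only a stand-in for vector similarity search.
    """
    q_words = tokenize(question)
    ranked = sorted(chunks, key=lambda c: len(q_words & tokenize(c)), reverse=True)
    return ranked[:k]


def build_prompt(question: str, context: list[str]) -> str:
    """Step 2 (input side): combine the retrieved text with the user question."""
    joined = "\n".join(f"- {c}" for c in context)
    return (
        "Answer the question using only the context below.\n"
        f"Context:\n{joined}\n"
        f"Question: {question}\n"
    )


question = "How do I reset my password?"
top_chunks = retrieve(question, HELP_DOCS, k=1)
prompt = build_prompt(question, top_chunks)
print(prompt)  # this string is what would be sent to the LLM
```

The instruction line in the prompt ("using only the context below") is what pushes the generator toward the retrieved text rather than its own guesses.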

5️⃣ What Is Indexing in RAG?

Indexing is the process of preparing your documents so they can be searched efficiently.

Indexing includes:

Loading documents (PDFs, docs, DB, web pages)

Cleaning text

Chunking text

Vectorizing chunks

Storing them in a vector database

Why indexing is important:

Fast search

Accurate retrieval

Scalable performance

Without indexing, retrieval is slow and inaccurate.
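The indexing steps listed above (load, clean, chunk, vectorize, store) can be sketched end to end as follows. Everything here is an assumption made for illustration: `IndexedChunk`, `clean`, `chunk`, `toy_embed`, and `build_index` are hypothetical names, the "vector database" is a plain Python list, and the letter-count embedding is only a placeholder for a real embedding model.

```python
import re
from dataclasses import dataclass


@dataclass
class IndexedChunk:
    source: str          # which document the chunk came from
    text: str            # the chunk text returned at query time
    vector: list[float]  # the chunk's embedding


def clean(text: str) -> str:
    """Collapse runs of whitespace and trim the ends."""
    return re.sub(r"\s+", " ", text).strip()


def chunk(text: str, size: int = 40) -> list[str]:
    """Split text into pieces of at most `size` characters."""
    return [text[i:i + size] for i in range(0, len(text), size)]


def toy_embed(text: str) -> list[float]:
    """Placeholder embedding: letter-frequency counts (26 dimensions).

    A real pipeline would call an embedding model here.
    """
    counts = [0.0] * 26
    for ch in text.lower():
        if "a" <= ch <= "z":
            counts[ord(ch) - ord("a")] += 1.0
    return counts


def build_index(documents: dict[str, str]) -> list[IndexedChunk]:
    """Load -> clean -> chunk -> vectorize -> store (here, a plain list)."""
    index = []
    for source, raw in documents.items():
        for piece in chunk(clean(raw)):
            index.append(IndexedChunk(source, piece, toy_embed(piece)))
    return index


docs = {"help.txt": "To reset   your password,\n go to Settings, then Security, then Reset Password."}
index = build_index(docs)
print(len(index), "chunks indexed")
```

Indexing runs once, ahead of time; at query time only the stored vectors are searched, which is where the speed comes from.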

6️⃣ Why We Perform Vectorization

Computers cannot compare the meaning of raw text directly.

They can compare numbers.

Vectorization means:

Convert text into numerical vectors (embeddings)

Similar meanings → closer vectors

Different meanings → farther vectors

Example

“How to login?”

“How to sign in?”

Even though the words differ, the vectors are very close.

This allows:

Semantic search

Meaning-based retrieval

Not just keyword matching
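"Closer" is usually measured with cosine similarity. The sketch below shows the idea on the two login questions from the example above. The 3-dimensional vectors are made up by hand for illustration (real embeddings come from a model and typically have hundreds of dimensions), and the refund question is an extra sentence added only for contrast.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors: 1.0 means same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Made-up 3-dimensional embeddings, for illustration only.
embeddings = {
    "How to login?": [0.90, 0.10, 0.05],
    "How to sign in?": [0.85, 0.15, 0.10],
    "What is the refund policy?": [0.05, 0.20, 0.95],
}

login = embeddings["How to login?"]
sim_sign_in = cosine_similarity(login, embeddings["How to sign in?"])
sim_refund = cosine_similarity(login, embeddings["What is the refund policy?"])
print(f"login vs sign in: {sim_sign_in:.2f}")
print(f"login vs refund:  {sim_refund:.2f}")
```

With these hand-picked vectors, the two login phrasings score far higher against each other than against the unrelated question, even though they share almost no keywords.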

7️⃣ Why RAG Uses Vector Databases

Vector databases (like Pinecone or Qdrant):

Store embeddings

Perform fast similarity search

Scale to millions of documents

They answer:

“Which pieces of text are closest in meaning to this question?”

This is the backbone of RAG retrieval.
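To make the "closest in meaning" question concrete, here is a minimal in-memory stand-in for a vector database. `TinyVectorStore` is a hypothetical class, not the API of Pinecone or Qdrant, and the vectors and texts are made up. It compares the query against every stored vector (brute force); real vector databases use approximate nearest-neighbor indexes to stay fast at millions of documents.

```python
import heapq
import math


class TinyVectorStore:
    """In-memory stand-in for a vector database (brute-force search)."""

    def __init__(self) -> None:
        self._items: list[tuple[list[float], str]] = []

    def add(self, vector: list[float], text: str) -> None:
        """Store one embedding together with the text it represents."""
        self._items.append((vector, text))

    def search(self, query: list[float], k: int = 2) -> list[tuple[float, str]]:
        """Return (score, text) for the k stored texts most similar to the query."""
        scored = ((self._cosine(query, vec), text) for vec, text in self._items)
        return heapq.nlargest(k, scored, key=lambda pair: pair[0])

    @staticmethod
    def _cosine(a: list[float], b: list[float]) -> float:
        dot = sum(x * y for x, y in zip(a, b))
        return dot / (math.hypot(*a) * math.hypot(*b))


store = TinyVectorStore()
# Made-up embeddings and texts, for illustration only.
store.add([0.90, 0.10, 0.05], "Log in from the home page with your email and password.")
store.add([0.10, 0.90, 0.10], "Reset your password under Settings → Security.")
store.add([0.05, 0.20, 0.95], "Refunds are handled by the billing team.")

query_vector = [0.85, 0.15, 0.10]  # pretend embedding of "How do I sign in?"
results = store.search(query_vector, k=2)
for score, text in results:
    print(f"{score:.2f}  {text}")
```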

8️⃣ Why We Perform Chunking

Documents are often too large to send to an LLM in full.

Chunking means:

Splitting large documents into small pieces

Each piece becomes searchable

Why chunking is necessary:

LLM token limits

Better retrieval accuracy

Faster search

Reduced cost

Example:

100-page PDF → 500 small chunks
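A minimal sketch of fixed-size chunking, assuming chunks are measured in words. `chunk_words` is a hypothetical helper; real pipelines often count tokens instead and try to split on sentence or paragraph boundaries.

```python
def chunk_words(text: str, chunk_size: int) -> list[str]:
    """Split text into consecutive chunks of at most `chunk_size` words."""
    if chunk_size <= 0:
        raise ValueError("chunk_size must be positive")
    words = text.split()
    return [
        " ".join(words[i:i + chunk_size])
        for i in range(0, len(words), chunk_size)
    ]


document = "To reset your password, go to Settings → Security → Reset Password"
chunks = chunk_words(document, chunk_size=4)
for c in chunks:
    print(repr(c))
```

Note that the split lands in the middle of the instruction, so no single chunk contains the full navigation path.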

9️⃣ Why Overlapping Is Used in Chunking

Chunking introduces a problem:

Important information may be split across two chunks.

Overlapping solves this by repeating the end of one chunk at the start of the next.

Example:

Without overlap

Chunk 1: “To reset your password, go to Settings”

Chunk 2: “→ Security → Reset Password”

Meaning is broken ❌

With overlap

Chunk 1: “To reset your password, go to Settings → Security”

Chunk 2: “Settings → Security → Reset Password”

Meaning is preserved ✅

Why overlap is important:

Maintains context

Prevents incomplete answers

Improves retrieval quality
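Overlap is usually implemented as a sliding window: each chunk starts a few words before the previous one ended. The sketch below is one way to do it, assuming word-based chunks. `chunk_with_overlap` and its parameters are hypothetical names, and the sizes are chosen only to fit the short example sentence.

```python
def chunk_with_overlap(words: list[str], chunk_size: int, overlap: int) -> list[list[str]]:
    """Sliding window: each chunk repeats the last `overlap` words of the previous one."""
    if not 0 <= overlap < chunk_size:
        raise ValueError("overlap must be >= 0 and smaller than chunk_size")
    step = chunk_size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(words[start:start + chunk_size])
        if start + chunk_size >= len(words):
            break  # the last window already reached the end of the text
    return chunks


words = "To reset your password, go to Settings → Security → Reset Password".split()
pieces = chunk_with_overlap(words, chunk_size=8, overlap=4)
for piece in pieces:
    print(" ".join(piece))
```

Here the second chunk contains the whole path "Settings → Security → Reset Password", so a retriever that returns it has the complete instruction. The trade-off is more stored text: larger overlaps mean more chunks and more embeddings to compute.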

10️⃣ When Should You Use RAG?

Use RAG when:

You have private documents

You need accurate answers

Data changes frequently

Hallucinations are unacceptable

Examples:

Internal company chatbots

Customer support AI

Legal / HR assistants

Knowledge base search

Developer documentation bots

Final Summary

RAG = Reliable AI

🔹 LLMs generate text

🔹 RAG grounds them in real data

🔹 Vectorization enables semantic search

🔹 Chunking + overlap preserve meaning

🔹 Indexing makes everything fast and scalable

RAG doesn’t make the model smarter. It makes its answers more truthful by tying them to your data.