Understanding RAGs – From Chunking to Vectorization (With Real-Life Examples)

Overview

Ever heard of RAG (Retrieval-Augmented Generation) and felt like it was rocket science? Don’t worry: in this blog, we’ll break it down using real-world analogies so even a non-techie can understand.

We’ll explain:

What is Indexing?

Why RAGs even exist

Why Vectorization is crucial

What is Chunking?

Why do we overlap chunks?

Whether you're a student, content creator, or developer — this one's for you.

What is Indexing?

Analogy: Imagine running a mobile accessories shop in Bengaluru.

Every product you have — from chargers to phone covers — is labeled and stored in shelves. That’s how you find items quickly.

Similarly, indexing in RAGs helps the AI quickly find the most relevant information from your documents, just like a catalog.

Without indexing, AI would have to “read the entire shop” every time you ask a question.

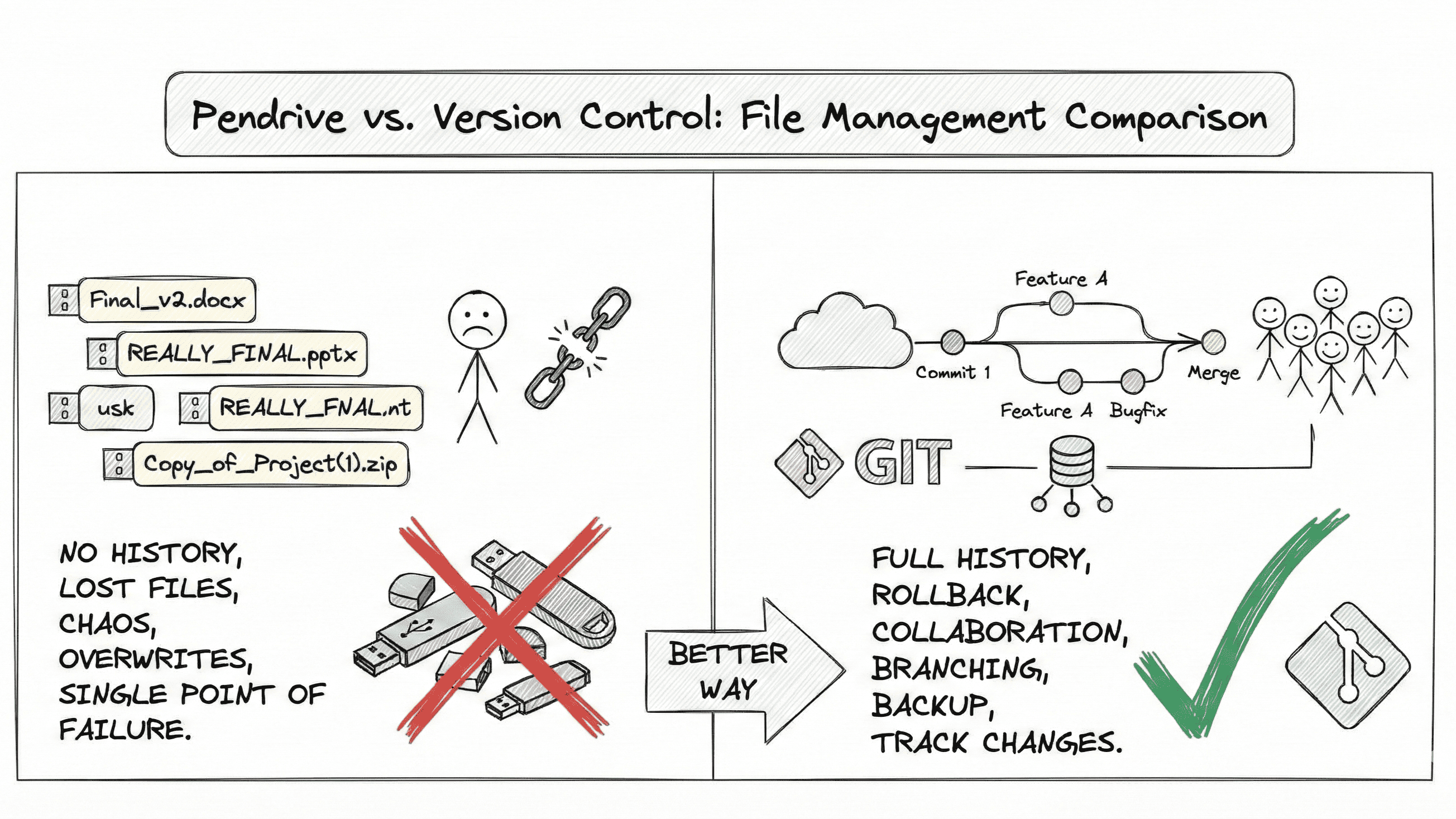
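To make the shelf-label idea concrete, here is a minimal sketch of an index in Python. It is a plain keyword index (word to document ids), chosen only because it is easy to read; RAG systems usually index vectors instead. The names `build_index` and `lookup` and the sample catalog are hypothetical.

```python
from collections import defaultdict

def build_index(docs):
    """Map each lowercase word to the set of document ids that contain it."""
    index = defaultdict(set)
    for doc_id, text in docs.items():
        for word in text.lower().split():
            index[word].add(doc_id)
    return index

def lookup(index, word):
    """Return matching document ids without scanning every document."""
    return sorted(index.get(word.lower(), set()))

# Hypothetical shop catalog: shelf label -> what is stored there
docs = {
    "shelf-1": "fast charger with USB-C cable",
    "shelf-2": "silicone phone cover",
    "shelf-3": "wireless charger pad",
}
index = build_index(docs)
print(lookup(index, "charger"))  # ['shelf-1', 'shelf-3']
```

The index is built once, and every later question is a quick lookup instead of a walk through the whole shop.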

Why Do RAGs Exist?

Generative AI like GPT is trained on tons of data, but it doesn’t know your private data — like your PDFs, website, documents, or helpdesk.

RAG = Retrieval + Generation

Retrieves the most relevant chunks from your own data (e.g., docs, FAQs)

Generates an answer using GPT with that data as context

🧾 RAG bridges the gap between AI's general knowledge and your specific data.
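The two steps above can be sketched in a few lines of Python. This is a simplified illustration, not a production pipeline: retrieval here scores chunks by shared words (real systems typically compare vectors), and the generation step stops at building the prompt, since which LLM you call is up to you. The function names and the sample helpdesk chunks are assumptions made for the example.

```python
import re

def words(text):
    """Lowercase the text and split it into words, dropping punctuation."""
    return set(re.findall(r"\w+", text.lower()))

def retrieve(question, chunks, top_k=2):
    """Step 1: pick the top_k chunks that share the most words with the question."""
    q_words = words(question)
    ranked = sorted(chunks, key=lambda c: len(q_words & words(c)), reverse=True)
    return ranked[:top_k]

def build_prompt(question, context_chunks):
    """Step 2: place the retrieved chunks in front of the question for the LLM."""
    context = "\n".join(f"- {c}" for c in context_chunks)
    return f"Answer using only this context:\n{context}\n\nQuestion: {question}"

# Hypothetical private data the LLM has never seen
chunks = [
    "Returns are accepted within 7 days with the original bill.",
    "The shop is open from 10 am to 9 pm on all days.",
    "Chargers carry a 6 month warranty.",
]
question = "What warranty do chargers have?"
prompt = build_prompt(question, retrieve(question, chunks, top_k=1))
print(prompt)
```

The printed prompt is what you would send to the model, so its answer is grounded in your own data rather than in its general training.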

Why Do We Perform Vectorization?

Analogy: A customer enters your mobile shop and says:

“Give me the best 5G phone under ₹15,000 with good battery.”

To understand the intent, your assistant has to go beyond words — they need to understand the meaning.

In AI, vectorization turns words and sentences into lists of numbers (vectors, also called embeddings) that capture their meaning, so the AI can search semantically.

💡 Example:

“battery backup” and “long-lasting power” mean similar things

Their vector representations will sit close together in the embedding space, while unrelated phrases end up farther apart

```python
from sentence_transformers import SentenceTransformer

# Load a small, general-purpose embedding model
model = SentenceTransformer('all-MiniLM-L6-v2')

# encode() returns one vector per input sentence
vectors = model.encode(["good battery", "long-lasting power"])

print(vectors)
```

This allows semantic search, not just keyword match!
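How do we measure "closer"? A common choice is cosine similarity, which is near 1 when two vectors point the same way and near 0 when they are unrelated. Here is a minimal sketch in plain Python; the three-number vectors are made up for illustration and do not come from a real model, which would produce hundreds of numbers per sentence.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

# Made-up toy vectors (assumption: real embeddings are much longer)
battery_backup = [0.9, 0.1, 0.2]
long_lasting_power = [0.8, 0.2, 0.3]
phone_cover = [0.1, 0.9, 0.1]

similar = cosine_similarity(battery_backup, long_lasting_power)
unrelated = cosine_similarity(battery_backup, phone_cover)
print(similar > unrelated)  # True
```

Semantic search boils down to this comparison: embed the question, then return the chunks whose vectors score highest against it.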

Why Do We Perform Chunking?

Problem: AI models like GPT have a token limit (the context window), so you can’t feed them an entire book or 100 pages of docs in one go.

Solution: We split the documents into smaller parts called chunks, and retrieve only the ones that matter for the question.

Analogy: When you get a new stock catalog for your mobile shop, you don’t memorize it all at once. You break it into:

Samsung Phones

iPhones

Chargers

Earphones

That’s chunking!
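Here is a small sketch of the same idea in Python: splitting a catalog into one chunk per category. It assumes a made-up catalog format in which a category heading is any line ending with a colon; the function name `chunk_by_category` and the sample items are hypothetical.

```python
def chunk_by_category(catalog_text):
    """Group catalog lines under the most recent heading (a line ending in ':')."""
    chunks = {}
    current = None
    for line in catalog_text.splitlines():
        line = line.strip()
        if not line:
            continue  # skip blank lines
        if line.endswith(":"):
            current = line[:-1]  # start a new chunk for this category
            chunks[current] = []
        elif current is not None:
            chunks[current].append(line)
    return chunks

# Hypothetical catalog text
catalog = """
Samsung Phones:
Phone A with 5G
Phone B with large battery
Chargers:
USB-C fast charger
"""
chunks = chunk_by_category(catalog)
print(list(chunks))  # ['Samsung Phones', 'Chargers']
```

Splitting along natural boundaries like categories or headings tends to keep related information together; fixed-size splitting is the fallback when the text has no such structure.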

Why Do We Overlap Chunks?

Imagine a comparison of two mobile models starts at the end of one page and continues at the start of the next in your catalog. If you split strictly by page, neither chunk holds the whole comparison, and the AI might miss context.

So we add overlapping chunks:

Chunk 1: Info A, B, C

Chunk 2: Info C, D, E

This way, context is preserved even if information is split across parts.

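```python
def chunk_with_overlap(text, chunk_size=200, overlap=50):
    """Split text into character chunks; neighbors share `overlap` characters."""
    if not 0 <= overlap < chunk_size:
        raise ValueError("overlap must be >= 0 and smaller than chunk_size")
    chunks = []
    start = 0
    while start < len(text):
        end = start + chunk_size
        chunks.append(text[start:end])
        if end >= len(text):
            break  # the last chunk already reaches the end of the text
        start += chunk_size - overlap  # step forward, keeping `overlap` characters
    return chunks
```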
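To see the "Info A, B, C" and "Info C, D, E" picture in action, here is a small sketch that applies the same sliding-window idea to a list of items instead of raw characters. The function name `window_with_overlap` and its default sizes are assumptions chosen to reproduce that example.

```python
def window_with_overlap(items, size=3, overlap=1):
    """Return consecutive windows of `size` items; neighbors share `overlap` items."""
    if not 0 <= overlap < size:
        raise ValueError("overlap must be >= 0 and smaller than size")
    step = size - overlap
    windows = []
    for start in range(0, len(items), step):
        windows.append(items[start:start + size])
        if start + size >= len(items):
            break  # this window already covers the last item
    return windows

print(window_with_overlap(["A", "B", "C", "D", "E"]))
# [['A', 'B', 'C'], ['C', 'D', 'E']]
```

Item C appears in both windows, so a question about something that spans C and D can still be answered from a single chunk. A larger overlap preserves more context but stores more duplicated text, so it is a trade-off to tune.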

Summary Table

| Concept | Analogy in Mobile Shop | Purpose in RAGs |
| --- | --- | --- |
| Indexing | Labeling items in shelves | Quick retrieval of chunks |
| Vectorization | Understanding customer intent | Search by meaning, not just keywords |
| RAGs | Staff + Catalog + Answer | Combines private info + LLMs |
| Chunking | Splitting catalog by category | Fit data into LLM context window |
| Overlap | Repeating edge info | Preserve full meaning across chunks |

✅ Final Thought

RAGs are not just tech jargon.

They’re smart ways of making AI feel more like a human expert — one who knows your data and responds like a pro.

Start simple, play with chunking/vectorization tools, and soon you’ll be building your own knowledge-enhanced AI apps!